Hosting your applications in the cloud yields many benefits, but it can be a scary place when things go wrong, and they always do.

If you love your customers, you owe it to them to build in redundancy and provide a high level of availability.

The Amazon EC2 Infrastructure provides a really easy way to set this up using the Elastic Load Balancing feature.

Before starting, you need to understand the basic concepts of how the EC2 infrastructure works and how it can help you in your quest for high availability.

Regions

Regions in EC2 are the geographical locations where the Data Centres reside. At the time of writing there are four Regions:

- US East – Northern Virginia

- US West – Northern California

- EU – Ireland

- APAC – Singapore

The Region you select should always be as close to your customers as possible.

Availability Zones

Each Region has two or more Availability Zones. These are important to understand because they are independent of each other: a failure in one zone does not affect the others.

Network Diagram

This is a fairly typical approach to load balancing a web application. The key thing to note is where the redundancy comes from: the instances you load balance should be in separate Availability Zones, so that losing one zone still leaves an instance serving requests.
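As a rough illustration of that rule, here is a minimal Python sketch that checks whether a set of instances spans more than one zone. The instance IDs, the zone names and both helper functions are made up for this example; nothing here calls AWS.

```python
from collections import Counter


def zone_spread(instances):
    """Count instances per Availability Zone.

    `instances` is a list of (instance_id, zone) pairs. The IDs and
    zone names used below are invented for illustration.
    """
    return Counter(zone for _instance_id, zone in instances)


def is_redundant(instances, min_zones=2):
    """Return True when the instances span at least `min_zones` zones."""
    return len(zone_spread(instances)) >= min_zones


web_tier = [("i-web1", "eu-west-1a"), ("i-web2", "eu-west-1b")]
single_zone = [("i-web1", "eu-west-1a"), ("i-web2", "eu-west-1a")]

print(is_redundant(web_tier))     # True
print(is_redundant(single_zone))  # False
```

Two instances in the same zone still share the load, but they share the zone's fate as well.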

This post covers only the Load Balancing aspect; I will leave database redundancy to another post.

Creating The Load Balancer

Creating the Load Balancer is easy using the AWS Console.

Under Networking & Security > Load Balancers you can “Create Load Balancer”.

This should be fairly self-explanatory.

First we assign a name to our Load Balancer, and then we set up the Ports the Load Balancer should listen on and forward to.

If you want to use HTTPS then you will need to do Port Forwarding as shown in the highlighted image.

Configure Health Check

Next you need to set up your Health Check. This is how the Load Balancer decides whether your Instance can have requests forwarded to it.

You can do this by setting the Ping Path which is an HTTP resource that is polled at an interval you define.

If it returns an HTTP status code other than 200, or does not respond in time, the check fails. After the configured number of consecutive failures your instance is deemed unhealthy and the Load Balancer stops forwarding requests to it.

The Ping Path can be a static HTML resource; however, it’s a good idea to run other system checks as well, so I like to use a .ASPX file with Code-Behind instead.
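The idea of a Ping Path that runs system checks can be sketched as follows. This is a Python stand-in for the .ASPX page, not the page itself. The `/health` path, the `disk_ok` placeholder and the `CHECKS` table are assumptions made for the example. The endpoint returns 200 only when every check passes and 503 otherwise.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer


def disk_ok():
    """Placeholder check; swap in a real test (database, disk, queue)."""
    return True


# Hypothetical table of checks: name -> zero-argument callable returning bool.
CHECKS = {"disk": disk_ok}


def run_checks(checks):
    """Return (status_code, body); 200 only if every check passes."""
    failed = []
    for name, check in checks.items():
        try:
            ok = check()
        except Exception:
            ok = False  # a check that raises counts as a failure
        if not ok:
            failed.append(name)
    if failed:
        return 503, "FAILED: " + ", ".join(sorted(failed))
    return 200, "OK"


class HealthHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.path != "/health":  # assumed Ping Path
            self.send_error(404)
            return
        status, body = run_checks(CHECKS)
        payload = body.encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


def serve(port=8080):
    """Start the health endpoint; blocks until interrupted."""
    HTTPServer(("", port), HealthHandler).serve_forever()
```

Calling `serve()` starts the endpoint. Keep the checks cheap, because the Load Balancer polls this path at every interval.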

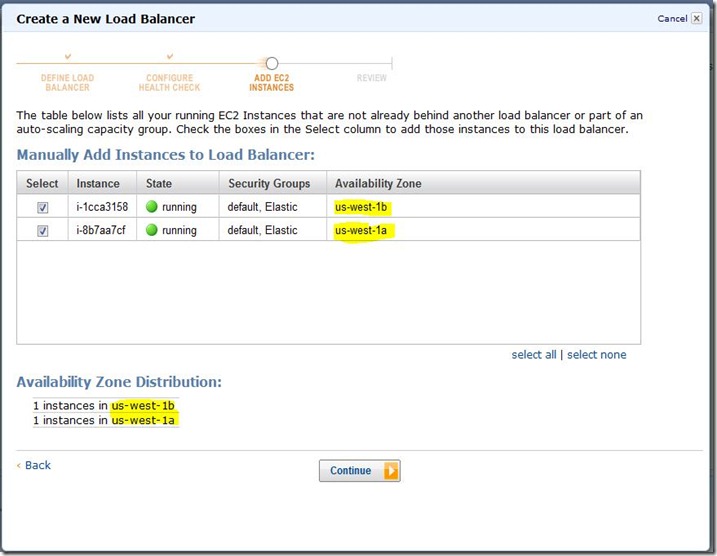

Adding Instances

Here you can select Instances from all Availability Zones in your Region. As I mentioned before, you should select Instances in different Availability Zones to provide a higher level of availability. Although you can have a single instance, you should have at least two.

DNS

When a load balancer is created it automatically gets assigned a DNS Name. To point your website address to your load balancer all you have to do is create a CNAME record for it.
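As a rough illustration, the record could look like the zone-file line this sketch prints. Both host names are made up. The real target is the DNS Name shown for your load balancer in the AWS Console, and `cname_record` is a hypothetical helper, not part of any AWS tool.

```python
def cname_record(alias, target, ttl=300):
    """Render a CNAME record in zone-file syntax.

    Names are made fully qualified by appending a trailing dot
    when it is missing.
    """
    def fqdn(name):
        return name if name.endswith(".") else name + "."

    return f"{fqdn(alias)} {ttl} IN CNAME {fqdn(target)}"


# Hypothetical website address and load balancer DNS Name.
print(cname_record("www.example.com",
                   "my-lb-1234567890.eu-west-1.elb.amazonaws.com"))
# www.example.com. 300 IN CNAME my-lb-1234567890.eu-west-1.elb.amazonaws.com.
```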

Managing The Load Balancer

Now that you have set up your load balancer, you can easily add more instances and see the Health of each instance by selecting the Load Balancer in the AWS Console.

Healthy

Unhealthy

And this is what it looks like if you have an unhealthy instance.

Key Issues

Although EC2 takes a lot of the pain out of setting up a load balanced solution, there are a few issues you need to be aware of before diving headfirst into it.

Connection Timeout Limit

At present the load balancer does not hold connections open for more than 60 seconds by design. So if you have any requests which take longer than a minute you are going to run into problems. If you do find yourself in this position you should really be looking at how to reduce the response times, either by using one-way Messaging or by reducing the amount of work the operation does.
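One-way messaging here means accepting the request, queueing the work and replying straight away, so the connection never comes close to the limit. A minimal Python sketch, assuming an in-process queue and a made-up `slow_operation`, is below. Across several load balanced instances the queue and the results would need to live in a shared service, not in memory.

```python
import queue
import threading
import uuid

jobs = queue.Queue()   # in-process stand-in for a real message queue
results = {}           # job id -> "pending" or the finished result


def submit(payload):
    """Called from the request handler: enqueue the work and return at once."""
    job_id = str(uuid.uuid4())
    results[job_id] = "pending"
    jobs.put((job_id, payload))
    return 202, job_id  # 202 Accepted: the work continues in the background


def slow_operation(payload):
    """Hypothetical stand-in for work that could exceed the 60-second limit."""
    return payload.upper()


def worker():
    """Drain the queue forever, recording each result or failure."""
    while True:
        job_id, payload = jobs.get()
        try:
            results[job_id] = slow_operation(payload)
        except Exception as exc:
            results[job_id] = f"failed: {exc}"
        finally:
            jobs.task_done()


threading.Thread(target=worker, daemon=True).start()

status, job_id = submit("generate report")
jobs.join()  # only for this demo; a real client would poll for the result
print(status, results[job_id])  # 202 GENERATE REPORT
```

The client gets the job id back immediately and asks for the result in a later, short request.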

Static IP Address Support

Unfortunately there is currently no support for a Static IP address for your load balancer. So if you are integrating with third parties who have strict firewall policies then you may have problems. I’m hoping though that Amazon add this feature in the future as it is needed in a lot of scenarios.

SSL Support

To enable HTTPS you have to use port forwarding at the load balancer level. You can achieve this by listening on 443 and forwarding to another port, for instance 8443.

In IIS you then need to change the port in the Site Bindings, and don’t forget to open 8443 on your Firewall.
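The port mapping can be written down as data, which makes it easy to see which ports IIS and the firewall must accept. This is only a sketch: the `Listener` type and `ports_to_open` helper are invented for the example, and the choice of a plain TCP listener for 443 is an assumption, not something the console screenshot confirms.

```python
from typing import NamedTuple


class Listener(NamedTuple):
    protocol: str        # protocol the load balancer listens with
    lb_port: int         # port exposed by the load balancer
    instance_port: int   # port the instance (IIS site binding) listens on


LISTENERS = [
    Listener("HTTP", 80, 80),
    # Assumption: HTTPS traffic is passed through as TCP and IIS handles SSL.
    Listener("TCP", 443, 8443),
]


def ports_to_open(listeners):
    """Instance-side ports the firewall has to allow."""
    return sorted({listener.instance_port for listener in listeners})


print(ports_to_open(LISTENERS))  # [80, 8443]
```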

Sticky Sessions

The Elastic Load Balancer does support Sticky Sessions via Cookies or QueryString; however, this only works for Port 80 traffic.

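Without Sticky Sessions, consecutive HTTPS requests from the same user can land on different instances. So if you’re using InProc Session State you will need to move to the SQL Server based provider, so that every instance sees the same session.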
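The problem is easy to reproduce in a toy model. In this Python sketch the `Instance` class and the round-robin rotation are invented for illustration, and a plain dictionary stands in for the SQL-backed session store. The first request logs in on one instance and the second request is served by the other.

```python
import itertools


class Instance:
    """A web server that keeps sessions in-process or in a shared store."""

    def __init__(self, name, session_store=None):
        self.name = name
        # No store passed in means private, in-process session state.
        self.sessions = {} if session_store is None else session_store

    def login(self, session_id, user):
        self.sessions[session_id] = user

    def whoami(self, session_id):
        return self.sessions.get(session_id)


def round_robin_demo(instances):
    """Log in on the first request, read the session on the second."""
    rotation = itertools.cycle(instances)
    next(rotation).login("abc", "alice")
    return next(rotation).whoami("abc")


# In-process state: the second instance has never seen the session.
print(round_robin_demo([Instance("a"), Instance("b")]))  # None

# Shared store (stand-in for the SQL based provider): the session survives.
shared = {}
print(round_robin_demo([Instance("a", shared), Instance("b", shared)]))  # alice
```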

Cache

If you’re using System.Web.Caching.Cache to cache objects then you’re also going to run into issues because of the Sticky Session problem on HTTPS: each instance keeps its own cache, so they can hold different values. If Caching is a critical factor in your application’s performance then you will need to consider a Distributed Cache solution like Memcached or Shared Cache.

Well that’s about all there is to it. I hope this helps someone.

Till next time.